Every action on the record.

Tied to a real identity.

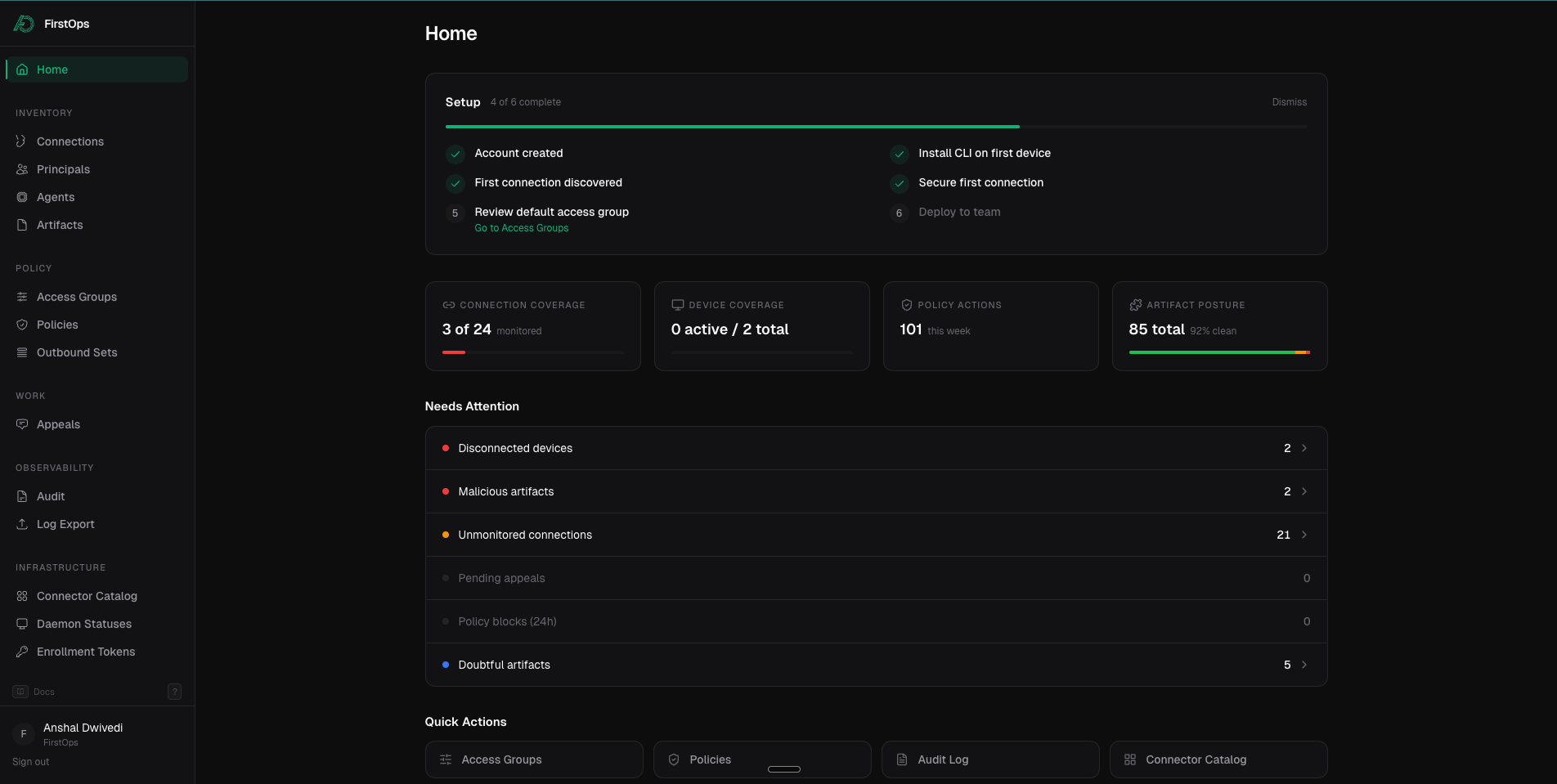

Each surface gets its own console, all wired to the same policy engine and the same audit log.

Every agent connection, routed through one place.

MCP is how agents reach Notion, GitHub, Postgres, Stripe. We put a gateway in the middle. Credentials are brokered per request, so agents never see tokens. Your security team gets one list of every agent-to-system connection in the org.

- ◆Auto-discovers every MCP server already wired up on the machine

- ◆Per-call policy, scoped to a real principal
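To make the brokering model concrete, here is a minimal sketch of a gateway that holds credentials on the agent's behalf. Everything in it is hypothetical and is not the FirstOps API: the `Gateway` and `ToolRequest` names, the in-memory `vault` keyed by (principal, server), and the allow-list standing in for per-call policy. The agent sends only a tool name and arguments. The gateway checks policy for the principal, records the verdict, and injects the token only when it forwards the call upstream.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from typing import Callable

# Hypothetical upstream signature: (token, tool, args) -> result dict.
Upstream = Callable[[str, str, dict], dict]


@dataclass
class ToolRequest:
    principal: str  # the human identity the agent acts for
    server: str     # MCP server name, e.g. "github" (illustrative)
    tool: str
    args: dict


@dataclass
class Gateway:
    vault: dict[tuple[str, str], str]   # (principal, server) -> token; hypothetical store
    allowed: set[tuple[str, str, str]]  # (principal, server, tool) allow-list as stand-in policy
    upstreams: dict[str, Upstream]
    audit: list[dict] = field(default_factory=list)

    def call(self, req: ToolRequest) -> dict:
        # Per-call policy: decide before anything reaches the upstream system.
        allowed = (req.principal, req.server, req.tool) in self.allowed
        self.audit.append({
            "principal": req.principal,
            "server": req.server,
            "tool": req.tool,
            "verdict": "allow" if allowed else "deny",
        })
        if not allowed:
            raise PermissionError(f"{req.principal} may not call {req.server}.{req.tool}")
        # The token is looked up per request and handed only to the upstream;
        # it is never returned to the agent.
        token = self.vault[(req.principal, req.server)]
        return self.upstreams[req.server](token, req.tool, req.args)
```

In a real deployment the vault would be a secrets manager and the allow-list a policy engine. The point of the sketch is only that the token never crosses back to the agent, and that every decision, allowed or denied, lands in one audit list.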

Every tool an agent invokes, inspected before it runs.

Read a file. Run a shell. Push to git. Install a package. Every one is a tool call, and every one can be the moment something goes wrong. We see every one, and we can stop the ones that shouldn't happen.

- ◆Zero agent code changes

- ◆Full session trace per principal
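A pre-execution check on tool calls can be sketched as a hook that sees each call's name and arguments before it runs and appends the outcome to a per-principal trace. The rule format, the tool names (`shell`, `git_push`, `read_file`), and the argument keys below are assumptions for illustration; they are not the FirstOps rule language.

```python
import re
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Rule:
    tool: str     # tool name the rule applies to (hypothetical)
    arg: str      # argument key to inspect
    pattern: str  # regex; a match blocks the call
    reason: str


RULES = [
    Rule("shell", "command", r"\brm\s+-rf\s+/", "recursive delete from filesystem root"),
    Rule("git_push", "branch", r"^(main|master)$", "direct push to a protected branch"),
    Rule("read_file", "path", r"(^|/)\.env$", "read of a secrets file"),
]


def inspect(principal: str, tool: str, args: dict, trace: dict) -> bool:
    """Return True if the call may run; record the verdict in the principal's trace."""
    for rule in RULES:
        value = str(args.get(rule.arg, ""))
        if rule.tool == tool and re.search(rule.pattern, value):
            trace[principal].append((tool, args, "block", rule.reason))
            return False
    trace[principal].append((tool, args, "allow", None))
    return True


trace = defaultdict(list)  # principal -> ordered session trace
```

Single regexes like these are easy to evade; the sketch only shows where the decision sits (before execution) and that blocked and allowed calls both end up in the same per-principal trace.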

Prompts and completions, scoped to a real identity.

The prompt is a database query now. It can leak PII, embed credentials, or carry customer names. We see every LLM call, tied to a real human principal, scrubbed on the way out and inspected on the way back.

- ◆Per-principal prompt + completion audit

- ◆Cost, latency, tokens, tied to one session trace
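The outbound and inbound path for a single LLM call might look like the sketch below. It assumes a caller-supplied `llm` function, two illustrative regexes (email addresses and AWS-style access key IDs) standing in for a real PII and secret detector, a crude whitespace token count, and a made-up per-1K-token price. None of these come from the product.

```python
import re
import time
from dataclasses import dataclass, field
from typing import Callable

EMAIL = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")
AWS_KEY_ID = re.compile(r"\bAKIA[0-9A-Z]{16}\b")


def scrub(text: str) -> str:
    """Replace matches with placeholders (illustrative patterns only)."""
    text = EMAIL.sub("[EMAIL]", text)
    return AWS_KEY_ID.sub("[AWS_KEY]", text)


@dataclass
class SessionTrace:
    principal: str
    events: list = field(default_factory=list)


def traced_llm_call(trace: SessionTrace, prompt: str, llm: Callable[[str], str],
                    usd_per_1k_tokens: float = 0.002) -> str:
    clean_prompt = scrub(prompt)          # scrubbed on the way out
    start = time.perf_counter()
    completion = llm(clean_prompt)
    latency_s = time.perf_counter() - start
    clean_completion = scrub(completion)  # inspected on the way back
    tokens = len(clean_prompt.split()) + len(completion.split())  # crude whitespace count
    trace.events.append({
        "principal": trace.principal,
        "prompt": clean_prompt,
        "completion": clean_completion,
        "flagged_completion": clean_completion != completion,
        "tokens": tokens,
        "latency_s": latency_s,
        "cost_usd": tokens / 1000 * usd_per_1k_tokens,
    })
    return clean_completion
```

Keeping prompt, completion, tokens, latency, and cost in one event per call is what lets them be read back as a single session trace for one principal.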

Skills are the new npm packages.

A skill is a few hundred lines of instructions that silently expand an agent's capabilities. A subagent can be invoked without your approval. Most teams have no idea what's loaded into their agents' context. We classify every artifact across your tenant by hash, prevalence, and verdict.

- ◆Hash-based lineage across every skill and subagent

- ◆Prevalence per artifact: by agent, by machine, by team
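Hash-based classification can be illustrated with a content hash per artifact. The sketch below assumes each observation of a skill or subagent file arrives as a hypothetical `Sighting` record (name, raw bytes, agent, machine, team). It uses SHA-256 as the content hash, derives a per-name version history from distinct hashes, and counts distinct agents, machines, and teams per hash. The verdict table is a plain dict standing in for whatever classification the product actually uses.

```python
import hashlib
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Sighting:
    name: str       # skill or subagent name as loaded by the agent (hypothetical)
    content: bytes  # raw file contents
    agent: str
    machine: str
    team: str


def artifact_hash(content: bytes) -> str:
    return hashlib.sha256(content).hexdigest()


def lineage(sightings):
    """name -> distinct content hashes in first-seen order (a simple version history)."""
    history = defaultdict(list)
    for s in sightings:
        h = artifact_hash(s.content)
        if h not in history[s.name]:
            history[s.name].append(h)
    return dict(history)


def prevalence(sightings):
    """hash -> number of distinct agents, machines, and teams that loaded it."""
    seen = defaultdict(lambda: {"agents": set(), "machines": set(), "teams": set()})
    for s in sightings:
        bucket = seen[artifact_hash(s.content)]
        bucket["agents"].add(s.agent)
        bucket["machines"].add(s.machine)
        bucket["teams"].add(s.team)
    return {h: {k: len(v) for k, v in b.items()} for h, b in seen.items()}


def verdict(h: str, known: dict) -> str:
    return known.get(h, "unknown")  # unseen hashes stay unclassified
```

Keying on content rather than file name means a one-line edit to a skill shows up as a new hash with its own prevalence and verdict, instead of silently inheriting the old one.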

One policy engine sits in front of the entire runtime.

One engine decides. One log records. Verdict-chasing becomes one query. Audit prep becomes one export, not a week of stitching logs.

Your security team already knows what's missing.

They can't tell you which agents are connected to what. They can't tell you which tools those agents can call. They can't point an auditor at a log that maps every agent action to a real person. Here's what they told us.

Ships with your MDM. Zero developer action.

Ship the FirstOps package through JumpCloud, Jamf, Intune, or Kandji. Every coding agent on the device (Claude Code, Cursor, Windsurf, Cline, Aider) is covered on first boot. Nothing to set up, nothing to patch, no code change. Autonomous agents you build use a small SDK and write to the same audit log.

- ✓Deploys via JumpCloud, Jamf, Intune, or Kandji, with zero developer action

- ✓Small SDK for autonomous agents built on any harness

- ✓Auto-discovers MCP servers already wired up per machine

- ✓Policy evaluated locally, hot-reload in < 1s
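Local evaluation with hot reload can be as simple as re-reading the policy file whenever its modification time changes. This sketch assumes a hypothetical JSON policy mapping tool names to `"allow"` or `"deny"` and checks the file's `st_mtime_ns` on every decision. A production agent would more likely use a filesystem watcher, and the sketch makes no claim about how FirstOps achieves its sub-second reload.

```python
import json
import os


class PolicyFile:
    """Evaluates tool calls against a local JSON policy, reloading it when the file changes."""

    def __init__(self, path: str):
        self.path = path
        self._mtime_ns = None
        self.rules: dict = {}

    def current(self) -> dict:
        # One stat() per decision; the file is re-parsed only when its mtime moves.
        mtime_ns = os.stat(self.path).st_mtime_ns
        if mtime_ns != self._mtime_ns:
            with open(self.path) as f:
                self.rules = json.load(f)
            self._mtime_ns = mtime_ns
        return self.rules

    def allows(self, tool: str) -> bool:
        return self.current().get(tool, "deny") == "allow"  # default-deny
```

Because the check runs on the next decision after an edit, reload latency is bounded by how often the agent makes calls rather than by a polling interval. Comparing modification times can miss an edit that lands within the filesystem's timestamp granularity, which is one reason a watcher or content hash is the sturdier choice.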

Say yes to agents in production.

With a security team that can.

Deploys through your MDM. See your agent runtime before your security team asks about it.